Human demonstration videos are a powerful way to program robots for long-horizon manipulation tasks. However, translating these demonstrations into robot-executable actions is challenging because humans and robots differ in movement style and physical capability.

Existing methods depend either on paired human-robot demonstrations or on visual similarities between the training and test domains. Humans often act swiftly, use both hands for manipulation, or even execute multiple tasks simultaneously, creating a mismatch in execution styles. This mismatch leads to misalignment between the human and robot embeddings, hindering direct policy transfer.

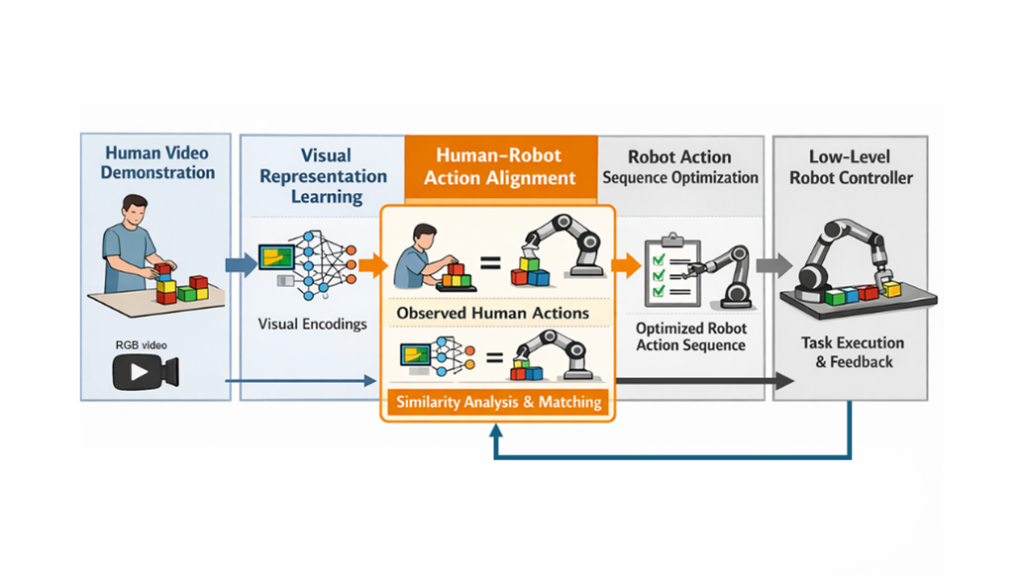

In this thesis, we explore methods for establishing an equivalence between human and robot embodiments across several manipulation tasks. Given a human video demonstration, the robot actions are chosen to maximize a similarity score between the human and robot representations. The resulting action sequence is then executed on the robot, and corrections are made to improve accuracy in future trials.
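The selection step described above can be illustrated with a minimal sketch. It assumes that each segment of the human video and each candidate robot action has already been encoded as a fixed-length embedding, and it uses cosine similarity as the score. The embeddings, the action names (`reach`, `grasp`, `place`), and the helper `select_robot_actions` are hypothetical and chosen only for illustration; the actual representation and similarity measure are left open here, and the on-robot correction step is not covered.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def select_robot_actions(
    human_segments: List[Vector],
    robot_candidates: Dict[str, Vector],
) -> List[str]:
    """For each human segment, pick the candidate robot action whose
    embedding has the highest similarity score with that segment."""
    plan = []
    for segment in human_segments:
        best = max(
            robot_candidates,
            key=lambda name: cosine_similarity(segment, robot_candidates[name]),
        )
        plan.append(best)
    return plan


# Toy embeddings (assumed, not learned): two human video segments
# and three candidate robot actions in a shared 3-D space.
human_segments = [[0.9, 0.1, 0.0], [0.0, 0.2, 0.8]]
robot_candidates = {
    "reach": [1.0, 0.0, 0.0],
    "grasp": [0.0, 1.0, 0.0],
    "place": [0.0, 0.0, 1.0],
}

plan = select_robot_actions(human_segments, robot_candidates)
print(plan)
```

This greedy per-segment choice is the simplest instance of the idea. It treats segments independently, so it does not account for temporal misalignment between a fast human demonstration and a slower robot execution.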