Introduction

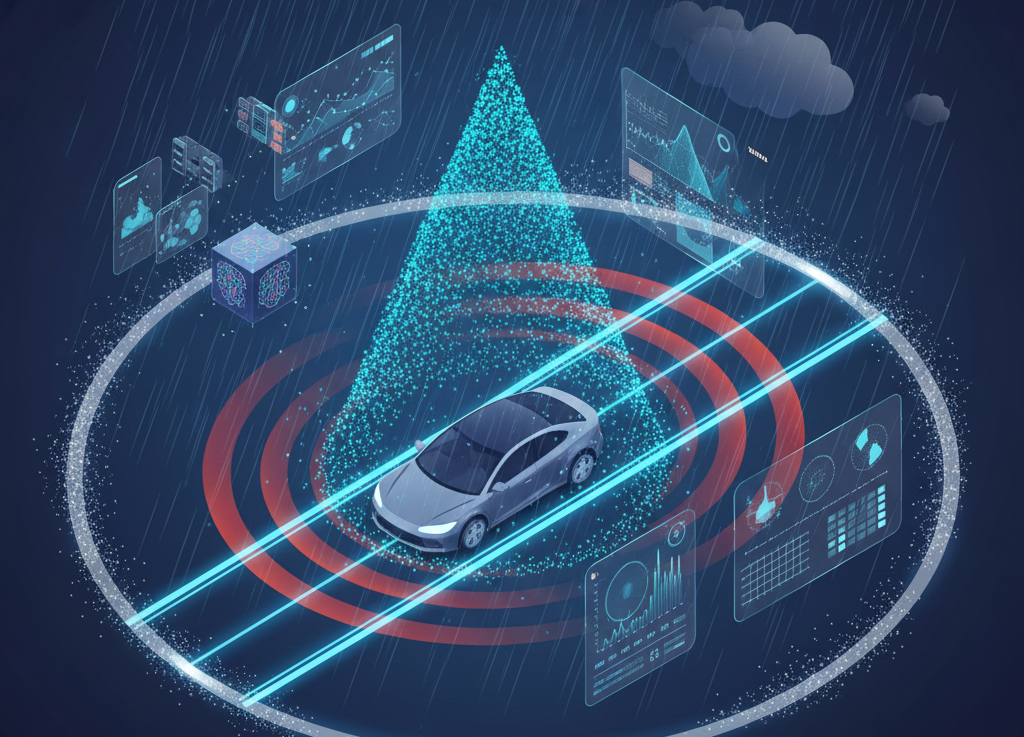

Reliable Simultaneous Localization and Mapping (SLAM) in adverse weather remains a significant challenge for autonomous driving. While LiDAR sensors offer high geometric precision, they are prone to signal degradation in rain, fog, and snow. Conversely, 4D Imaging Radar is more resilient to such conditions and provides Doppler (radial velocity) information, but it suffers from inherent sparsity, multipath noise, and lower resolution. Current methodologies typically process these sensors using Graph Neural Networks (GNNs) or Transformers. However, these architectures struggle to process the long temporal histories needed to distinguish signal from noise in sparse Radar data, because their computational cost grows quadratically with the sequence length N (O(N^2)).

This thesis proposes a novel navigation backbone based on Selective State Space Models (SSMs), specifically leveraging the Mamba architecture. The core objective is to exploit the linear complexity (O(N)) of SSMs to process extended sequences of sensor data. This approach allows the system to reconstruct a stable environmental representation from noisy 4D Radar streams by aggregating information over long time horizons. Furthermore, the architecture is designed as a flexible, sensor-agnostic framework, capable of operating in a Radar-only mode for weather robustness or integrating auxiliary modalities (e.g., LiDAR, IMU) when available, to enhance geometric fidelity.
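To make the linear-complexity argument concrete, the following is a minimal sketch of a selective state-space recurrence. It is a deliberately simplified illustration, not the Mamba implementation: it uses a single channel and a scalar state, and only the step size `delta` depends on the input, whereas Mamba also makes the input and output projections input-dependent. The function names and the parameters `a`, `b`, `c`, and `w_delta` are hypothetical.

```python
import math


def softplus(z: float) -> float:
    """Numerically stable softplus; always returns a positive value."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def selective_scan(xs, a=-1.0, b=1.0, c=1.0, w_delta=0.5):
    """Single-channel, scalar-state selective SSM (illustrative only).

    Recurrence, with an input-dependent step size delta_t:
        h_t = exp(delta_t * a) * h_{t-1} + delta_t * b * x_t
        y_t = c * h_t
    All parameter names and default values are assumptions for this sketch.
    """
    h = 0.0  # hidden state: constant memory regardless of sequence length
    ys = []
    for x in xs:  # one pass over the sequence, so the cost is O(N)
        delta = softplus(w_delta * x)  # "selectivity": step size depends on x
        a_bar = math.exp(delta * a)    # decay factor in (0, 1) because a < 0
        h = a_bar * h + delta * b * x  # forget part of the past, absorb the input
        ys.append(c * h)
    return ys
```

Each step touches only the current input and the fixed-size state, so doubling the history length doubles the work instead of quadrupling it.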

Goals

Long-Sequence Temporal Modeling: To develop a Mamba-based backbone capable of processing significantly longer history windows than standard sliding-window approaches. This is crucial for 4D Radar, as temporal redundancy is the key to compensating for sparsity and filtering out multipath artifacts (ghost objects).

Unified & Extensible Architecture: To design a generic “Sequence-to-Pose” architecture in which sensor data is tokenized into a continuous stream. The system must be capable of learning ego-motion primarily from 4D Radar, with the architectural flexibility to accept LiDAR tokens in a Multi-Modal configuration without structural redesign.
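One way such a token stream could be organized is sketched below. This is an assumption-laden illustration, not the proposed design: the `Token` layout, the modality identifiers, the fixed feature width, and the helper names `tokenize` and `build_stream` are all hypothetical. The point it illustrates is that adding a modality only adds tokens to the stream, without changing the downstream sequence model.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

# Hypothetical modality identifiers and feature width for this sketch.
RADAR, LIDAR = 0, 1
FEATURE_DIM = 4  # e.g. (x, y, z, doppler) for a 4D Radar point


@dataclass(frozen=True)
class Token:
    timestamp: float             # acquisition time in seconds
    modality: int                # RADAR or LIDAR
    features: Tuple[float, ...]  # zero-padded to FEATURE_DIM


def tokenize(timestamp: float, modality: int,
             points: Sequence[Sequence[float]]) -> List[Token]:
    """Turn one sensor frame into tokens with a common feature width."""
    tokens = []
    for p in points:
        if len(p) > FEATURE_DIM:
            raise ValueError("point has more features than FEATURE_DIM")
        # LiDAR points (x, y, z) carry no Doppler value, so pad with zeros.
        padded = tuple(p) + (0.0,) * (FEATURE_DIM - len(p))
        tokens.append(Token(timestamp, modality, padded))
    return tokens


def build_stream(*frames: List[Token]) -> List[Token]:
    """Merge frames from any number of sensors into one time-ordered stream."""
    merged = [t for frame in frames for t in frame]
    return sorted(merged, key=lambda t: t.timestamp)  # stable sort keeps ties in order


# Usage: Radar-only mode passes one frame list; multi-modal mode passes more.
radar = tokenize(0.10, RADAR, [(1.0, 2.0, 0.5, -3.2)])
lidar = tokenize(0.05, LIDAR, [(1.1, 2.1, 0.4)])
stream = build_stream(radar, lidar)
```

In a learned model the modality identifier would typically be mapped to an embedding, which is outside the scope of this sketch.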

Computational Efficiency: To demonstrate that the Mamba architecture outperforms Transformer-based baselines (e.g., those typically used in Radar-LiDAR fusion) in terms of memory footprint and inference latency, making high-frequency SLAM feasible on embedded hardware.
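The scaling behind this goal can be illustrated with a rough operation count. The sketch below is not a benchmark and ignores constant factors, projections, and hardware effects. The function names are hypothetical, and the default state size of 16 is an assumption chosen for illustration.

```python
def attention_cost(n_tokens: int, d_model: int) -> int:
    """Approximate multiply-adds for one self-attention layer.

    Counts the score matrix (N x N x d) and the weighted sum of values
    (N x N x d); projections and softmax are ignored.
    """
    return 2 * n_tokens * n_tokens * d_model


def ssm_scan_cost(n_tokens: int, d_model: int, d_state: int = 16) -> int:
    """Approximate multiply-adds for one SSM scan layer.

    Counts one state update and one readout per token, channel, and
    state dimension; d_state=16 is an assumed value.
    """
    return 2 * n_tokens * d_model * d_state


# Doubling the history length quadruples the attention cost
# but only doubles the scan cost.
attn_growth = attention_cost(2000, 64) / attention_cost(1000, 64)
scan_growth = ssm_scan_cost(2000, 64) / ssm_scan_cost(1000, 64)
```

Actual memory footprint and latency depend on the implementation and the target hardware, which is what the evaluation is meant to measure.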

Framework: The implementation will leverage standard Deep Learning frameworks (PyTorch). Training and evaluation will be performed on high-end GPUs (NVIDIA RTX 4090).

Requirements

- Knowledge of MATLAB or Python;

- Aptitude for machine learning and software development (can be learned during the project);

- Basic knowledge of control theory and vehicle dynamics is a plus (can be learned during the project).

Contact

Davide Possenti: davide.possenti@polimi.it

Stefano Arrigoni: stefano.arrigoni@polimi.it

For inquiries and further information, please email the first author and CC the other.